Where AR Stands Today—Interpreting the Current State Through a Web-Lens After Attending AWE 2025 Around the World

(For the Japanese version, please refer to the link below.)

I am Namura, the CEO of servithink co.,ltd. At Servithink, we have been conducting research and development (R&D) in the XR field since 2024. The foundation for these activities can be traced back to a blog post I wrote in 2018 titled, “Servithink’s Perspective on the End of the Smartphone and the Future of the Next Digital Experience.”

As part of these efforts, we actively conducted overseas research visits throughout 2025. In particular, AWE (Augmented World Expo) is the world’s largest conference dedicated to XR, and it is held annually across three major regions: the United States, Europe, and Asia.

Servithink participated in all of the 2025 events, with the final one taking place in Singapore in February 2026.

Our R&D lead, Fujiwara, has shared insights from “AWE Asia 2026” in an article titled “The Future of XR as Seen at AWE Asia 2026 in Singapore.” I highly encourage you to read it as well.

With that context in mind, I would like to provide an overall recap of this year’s activities.

Rereading the AWE Conference Not as a “Technology Trend,” but as a Shift in Design Constraints

I attended the XR conference “AWE Asia 2026,” held in Singapore, and sat in on a wide range of sessions covering AR, smart glasses, spatial UI, generative AI, and more.

The themes were diverse, and at first glance, the event appeared to showcase the “cutting edge” of XR. However, what left a strong impression on me was that many sessions devoted more time not to discussing how to create new experiences, but to examining where the design assumptions we once took for granted are beginning to break down.

In that sense, it was far more than just a presentation of the latest information. Numerous thought-provoking issues were raised, and rather than focusing on novel experiences, it became clear that XR is steadily moving toward a stage centered on real-world deployment and operation.

Hardware in the XR industry is undoubtedly evolving.

I was strongly reminded of this at CES 2026, held in the United States this January. (For a report on the state of smart glasses at CES 2026, please see “The Current State of Smart Glasses as Seen at CES 2026.”)

Meta’s most anticipated AR device, “Orion”

As many of you already know, new input methods have been emerging—for example, the wristband-style Meta Neural Band adopted for Meta Ray-Ban, and the ring-style device called “R1” used with Even Realities’ “Even G2.” At the same time, generative AI has dramatically accelerated the speed of producing content and other materials.

Despite that, the overall mood at the venue wasn’t optimistic in the sense of “we have more freedom now.” Instead, it had a very pragmatic tone: the constraints we need to protect—and how to protect them—have become clearer. I felt something similar at UnitedXR Europe 2025, held in Belgium in December 2025 as well. (For an on-site report from UnitedXR Europe 2025, please see “XR Through a European Lens: What We Felt at UnitedXR Europe 2025.”)

This blog post is not intended to be any of the following for the sessions at AWE Asia 2026:

- A summary of product announcements

- An overview of technology trends

- A collection of use cases

Instead, the goal is to organize firsthand insights from attending in person—specifically, from the perspective of Servithink as a web production company—about AR spaces, which we see as the next destination for “where web content will be experienced.” In order for AR to be viable in real-world use, we want to clarify what designers are starting to give up, what they are cutting back, and what new assumptions they are beginning to adopt.

In particular, I’d like to summarize how the global XR industry—including AR—is currently framing these topics through the lens of friction points across design, implementation, and operations:

- Why hardware is no longer seen as something that “expands the experience”

- Why hardware-dependent AR experiences don’t scale

- The reality of AR being used as a “redesign of information access”

- Why web-like structures are proving effective in practice

- The design challenges that widgets and UI/UX force us to confront

Also, rather than offering a broad “everything-and-the-kitchen-sink” event recap, this will be a focused summary based on our company’s direction—namely, the convergence of web production and AR.

About AWE Asia 2026

“AWE Asia” is an abbreviation for “Augmented World Expo Asia.”

https://www.aweasia.com/

A List of AWE Events Held in 2025

- AWE USA 2025

- UnitedXR Europe 2025 (starting in 2025, “AWE EU” merged with the international XR forum “Stereopsia Europe” and now operates as a new event called “UnitedXR Europe”)

- AWE Asia 2026

The most recent AWE Asia was held at Singapore Expo from February 3 to February 5, 2026, spanning three days. However, since the first day consisted only of the Opening Reception, the conference itself effectively ran for two full days.

From 9:00 a.m. to 5:00 p.m., a wide variety of sessions were held in rotation across three separate venues.

At Servithink, two of us attended the event: Fujiwara, who leads R&D, and Namura, our CEO. In total, we sat in on around 30 sessions. Naturally, all presentations were delivered in English, and there was no simultaneous interpretation provided, which may make it a relatively high hurdle for Japanese attendees.

Even so, we continue to participate because our primary goals are to obtain firsthand information and, whenever possible, to communicate directly with people on site. In addition, the exhibition floor features real hardware and software demonstrations, offering hands-on experiences that simply cannot be replicated without being there in person. For example, being able to actually try out Snap’s Spectacles is something unique to events like this.

Hardware evolution is no longer about devices that “expand the experience,” but rather about becoming foundational constraints that shape design.

What became clear through AWE Asia 2026 was that hardware evolution is no longer being discussed as the “main attraction” for making experiences more spectacular.

Rather, what was consistently conveyed was that hardware is no longer something that expands experiential freedom. Instead, it is increasingly becoming a set of constraints that designers must strictly adhere to. The focus is shifting away from one-off, eye-catching experiences toward a more practical direction: how to satisfy those constraints in order to enable sustainable, real-world use going forward.

This is not a discussion about individual hardware specs or performance metrics.

From a hardware perspective, I also attended CES 2026 in January 2026, where I saw a wide range of devices firsthand. What I felt there as well was that even at CES, smart glasses are no longer about unveiling entirely new technologies. Instead, we seem to have reached a stage where the core capabilities are largely established, and the focus has shifted to refining them for integration into mainstream consumer products.

As of 2026, devices such as Meta’s “Meta Ray-Ban” and Even Realities’ “Even G1/R1” feel less like finished products and more like milestones along a roadmap toward better iterations in the future. One could even argue that they are prototypes of the products ordinary consumers will realistically be using two to three years from now.

Returning to AWE Asia 2026, what repeatedly surfaced across sessions was not the question of “What can we do?” but rather, “What should we deliberately prevent users from doing?”—a fundamentally different design judgment.

The assumption that the user’s field of view must not be obstructed.

In environments built around smart glasses and wearable AI, the very idea of “constantly displaying information” is now being treated as a problem in itself.

Full-screen displays can no longer be assumed as the default, and always-on visuals can cause fatigue and distraction. In fact, there is a shared understanding that the longer information remains in the user’s field of view, the more the overall experience value may decline.

At our company, we make an effort to acquire and test as many smart glasses as possible. As of February 16, 2026 (Reiwa 8), we consider Even Realities’ “Even G2” to be the most advanced in the category of glasses-style devices. Its display is monochrome green, yet even so, the cognitive load becomes considerable when it is left on continuously. For that reason, the Even G2 allows users to easily toggle the display on and off via head tilt gestures or through the ring-type controller, the “Even R1.” Similarly, with Meta’s “Meta Ray-Ban,” users can attach a wristband-style controller and toggle the display with a double tap of the thumb and middle finger.

In other words, screen visibility is expected to be “brief,” “intermittent,” and “only at necessary moments”—not as a matter of UX preference, but as a core design requirement.
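To make the “brief and intermittent” requirement concrete, here is a minimal sketch of a display policy in which the screen is turned on by an explicit toggle and hides itself once a time budget is used up. The `DisplayState` shape, the function names, and the 5-second budget are assumptions made for illustration. They do not describe how the Even G2 or Meta’s devices actually work.

```typescript
// Hypothetical display state for a glasses-style device (illustrative only).
interface DisplayState {
  // Timestamp (ms) at which the display was turned on, or null when it is off.
  visibleSinceMs: number | null;
}

// Assumed time budget for one glance; not a figure from any real product.
const MAX_VISIBLE_MS = 5000;

// Explicit user action (for example a head tilt or a tap): flip the display.
function toggleDisplay(state: DisplayState, nowMs: number): DisplayState {
  return { visibleSinceMs: state.visibleSinceMs === null ? nowMs : null };
}

// Called periodically: hide the display once the time budget is exhausted,
// so that content never stays in the field of view indefinitely.
function autoHide(state: DisplayState, nowMs: number): DisplayState {
  if (
    state.visibleSinceMs !== null &&
    nowMs - state.visibleSinceMs >= MAX_VISIBLE_MS
  ) {
    return { visibleSinceMs: null };
  }
  return state;
}
```

The point of the sketch is that “off” is the resting state. Visibility has to be requested, and it expires on its own.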

This suggests that the conventional mindset—“if we can display more, we should show more”—does not hold true in the context of AR and smart glasses.

Initially, I believed that because PCs are not easily portable, and smartphones cannot significantly increase their screen size (since doing so moves into tablet territory and reduces portability), digital expression would naturally migrate into “space = AR,” which can function as a massive virtual screen.

However, precisely because AR content can exist constantly within the user’s field of view, the challenge is not simply to increase what is shown, but to carefully determine what should be shown—and what should not.

A design decision that does not assume active user interaction.

Equally important is the idea of not assuming active human interaction as a baseline.

While smart glasses now support a growing range of input methods—such as voice, gaze, gestures, proximity-based controls, and wearable inputs—these modalities share common constraints: they are not always highly precise, they are not well suited for continuous interaction, and they are prone to unintended inputs.

As a result, in UI design, decisions such as “do not place small interactive targets,” “do not require precise positional input,” and “do not create multi-step interaction flows” were treated not as recommendations, but as baseline conditions.
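One way to picture “baseline conditions rather than recommendations” is a check that rejects a UI flow outright when it breaks any of the three rules. The sketch below is only an illustration: the `UiSpec` shape and the threshold values are hypothetical and were not presented at the conference.

```typescript
// Hypothetical description of one interactive flow on a smart-glasses UI.
interface UiTarget {
  id: string;
  angularSizeDeg: number;        // apparent size in degrees of visual angle
  needsPrecisePosition: boolean; // true if the user must hit an exact point
}

interface UiSpec {
  targets: UiTarget[];
  stepsToComplete: number; // discrete inputs needed to finish the flow
}

// Assumed thresholds, chosen for illustration only.
const MIN_TARGET_DEG = 3;
const MAX_STEPS = 1;

// Returns a list of violated baseline conditions (empty means the flow passes).
function checkBaseline(spec: UiSpec): string[] {
  const violations: string[] = [];
  for (const t of spec.targets) {
    if (t.angularSizeDeg < MIN_TARGET_DEG) {
      violations.push(t.id + ": interactive target is too small");
    }
    if (t.needsPrecisePosition) {
      violations.push(t.id + ": requires precise positional input");
    }
  }
  if (spec.stepsToComplete > MAX_STEPS) {
    violations.push("flow: multi-step interaction (" + spec.stepsToComplete + " steps)");
  }
  return violations;
}
```

A flow with one large confirm target and a single step returns an empty list, while a small slider inside a three-step flow fails all three checks.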

The evolution of hardware is confronting designers not with the idea that “we can now enable new interactions,” but rather that “the conventional assumptions about interaction no longer apply.”

This suggests that when web content begins to be displayed within AR environments, an entirely different UI design philosophy will be required compared to what we have relied on until now.

Accessibility is not an “ideology,” but a condition for avoiding failure.

What stood out to me was that “accessibility” was not discussed as an ideology.

Rather than being framed in the conventional context of “for the elderly” or “for people with disabilities,” accessibility was treated as a design condition for avoiding failure-prone experiences—even for able-bodied users operating AR devices.

Improving visibility. Reducing the amount of information. Minimizing the number of interactions.

These are not matters of “kindness.” They are highly pragmatic decisions—and increasingly mandatory conditions—aimed at preventing breakdowns in the user experience, such as operational errors, hesitation due to confusion, or interruptions that derail the experience entirely.

Importantly, this way of thinking structurally aligns with long-standing discussions around web accessibility in frameworks such as WCAG and Japan’s web accessibility standard, JIS X 8341. The core design principles debated for years—reducing cognitive load, preventing user confusion, and simplifying interaction—are fundamentally consistent with what is now being demanded in AR environments.

Why Hardware-Dependent AR Experiences Don’t Scale

At AWE Asia, there were indeed case studies presented that demonstrated successful applications of AR—such as AR mirrors, event-based AR activations, and large-scale AR concepts involving vehicles and cities (digital twins).

However, when closely examining the firsthand information presented at this conference, it becomes clear that these successes are not built on assumptions of reproducibility or scalability.

The issue is not AR’s expressive power or technical sophistication. The issue lies in the fact that the structural conditions under which AR currently succeeds are inherently limited.

The more successful the case study, the more tightly constrained the conditions tend to be: the location is fixed, the purpose is simple, the experience duration is short, and repeat usage is not assumed. Under those circumstances, rough UI edges, fluctuations in tracking accuracy, and instability in input mechanisms are less likely to surface. In other words, many “successful” cases first establish conditions and contexts in which problems are unlikely to occur.

However, the moment we shift the premise to daily use—something users return to repeatedly, something embedded into work or everyday life—design flaws quickly become visible.

One extra step in an interaction. A slight delay in response. Occasional tracking errors. Difficulty adjusting information density.

These may be tolerable in one-off experiences, but in everyday use, they immediately become reasons the product is abandoned.

Similarly, future-oriented visions such as in-vehicle AR or city-scale AR are technically compelling. Yet beyond the technology itself, they face pre-content challenges: responsibility for updating content, ensuring the accuracy of displayed information, adapting to environmental changes, and accounting for contextual differences among users. These are not problems that will naturally resolve as technology advances—they are issues of information architecture and operational design.

At the same time, it could be argued that AR content, having first been adopted in relatively localized and less conspicuous contexts for general users, is now gradually entering a new phase—one where the central question is no longer “Can this be done?” but rather, “How can this become something people use as a matter of course?”

AR is becoming not a technology that changes how things are expressed, but one that restructures how information is accessed.

What was repeatedly emphasized at this conference was the reality that AR can no longer stand on its own as merely a matter of “displaying things in 3D” or “overlaying content onto physical space.”

In many practical use cases, AR’s role is not to change how information is presented.

Rather, it is to restructure the very pathway through which users reach information.

A symbolic example can be found in AR applications for manuals and guided instructions.

Traditionally, users were expected to engage in a process of “search and exploration”: locate a search bar, enter keywords, choose from a list of results, and then read through a page.

By contrast, in AR-based implementations, the act of simply pointing a camera at an object and having it recognized effectively serves as both search and navigation at once.

In this flow, users are not required to “search,” “choose,” or “read” in the conventional sense.

Information is automatically surfaced based on context and the object at hand. This is not merely a change in UI—it is a transformation in the structure of information access itself. What used to rely on language-based search mechanisms is, in the AR paradigm, shifting toward a model where camera-equipped AR devices are used in everyday life, fundamentally altering the means of reaching information.
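Seen from the website side, this flow can be sketched as a lookup in which the recognized object and the current context replace the typed query. Everything in the example below is hypothetical: the `ContentEntry` shape, the object identifier, the context names, and the `example.com` URLs are placeholders, and how recognition actually produces an identifier is left out.

```typescript
// Hypothetical content entry a website might publish for a physical object.
interface ContentEntry {
  objectId: string;  // identifier a recognizer is assumed to return
  context: string;   // situation in which the entry applies
  summary: string;   // one short line suitable for a small display
  detailUrl: string; // full page for users who want to go deeper
}

// Placeholder data for illustration only.
const sampleCatalog: ContentEntry[] = [
  { objectId: "router-x1", context: "setup", summary: "Plug in the power cable first.", detailUrl: "https://example.com/router-x1/setup" },
  { objectId: "router-x1", context: "troubleshooting", summary: "Hold reset for 10 seconds.", detailUrl: "https://example.com/router-x1/help" },
];

// The recognized object plus context acts as the "query":
// prefer an exact context match, otherwise fall back to any entry for the object.
function resolveContent(
  objectId: string,
  context: string,
  entries: ContentEntry[]
): ContentEntry | undefined {
  const forObject = entries.filter((e) => e.objectId === objectId);
  const exact = forObject.filter((e) => e.context === context);
  return exact.length > 0 ? exact[0] : forObject[0];
}
```

There is no search box, no result list, and no page to read through. The user gets one short entry, with the full page one step away.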

At that point, the key question becomes: within the medium of the web, what does “search” mean? And on the website side—the destination being reached—what kinds of information will need to be published and structured on web servers to accommodate this new mode of access? I believe this will become a major point of contention going forward.

Why Web Content Will Continue to Remain Relevant in the AR Era

Throughout the conference, there was little explicit advocacy for “using the web” or “integrating the web and AR.”

As someone whose profession is web production, I found that somewhat disappointing. However, when I organized the discussions around design assumptions and implementation structures presented at the conference, I realized that many of them overlap with principles the web has long taken for granted. In fact, when considering the need for continuously updating information, it seems inevitable that we arrive at web-based technologies.

AR experiences cannot succeed as one-off creations. Usage environments change. Input methods evolve. Display conditions shift. With generative AI accelerating production speed, it is now assumed that experiences will be rebuilt, replaced, and reconfigured on an ongoing basis.

This way of thinking is not unique to AR—it aligns closely with the operational model the web has embraced from the beginning. Conversely, the AR world still largely operates through highly specialized, purpose-built applications. To use an older analogy, it resembles the era when standalone word processors were considered complete systems in themselves. We may now be at an early transitional phase—similar to the shift from dedicated word processors to software running on general-purpose PCs, and eventually to web-based tools such as Google Docs.

In that sense, as mentioned earlier, professionals who can handle upstream design—information architecture and UI/user experience design in web development—will likely retain a significant advantage moving forward.

Widgets Are Not Just a UI Concept — They Represent the Design and Lifecycle Management of “Experience Units” in the AR Era

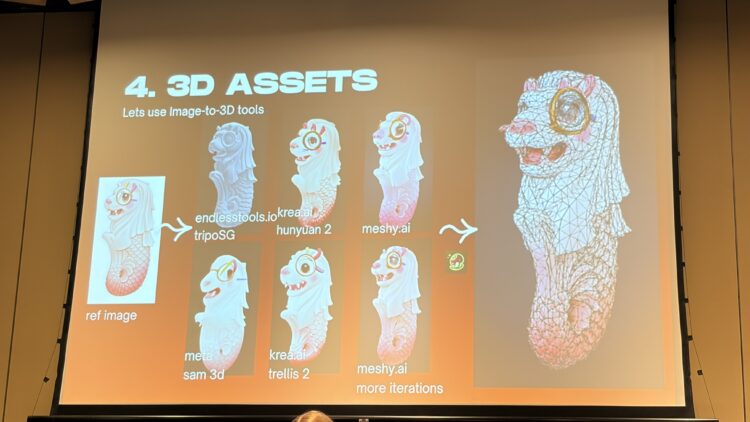

There were also sessions that focused on the concept of “widgets.” This was not merely about extracting UI components.

Rather, it can be understood as a question of experience lifecycle design: at what scale should XR experiences be modularized, how long should they exist within XR space, and how should they disappear?

From the perspective that AR experiences become difficult to use when they occupy too much of the user’s field of view, experiences that are “too large” tend to fail. Larger experiences increase display duration, require more interaction, and become intrusive when the user’s context changes.

At the AWE Asia exhibition, I was able to see a product from a smart glasses lens manufacturer that offered Full HD resolution (1920 × 1080 pixels). Visually, it felt as though nearly 80% of my field of view was covered by a monitor. The color reproduction was bright and the image density was high. However, I also found myself thinking, “At this point, I can barely see what’s in front of me.”

For that reason, AR experiences at the present stage need to satisfy certain conditions: single-purpose use, short duration, and strong contextual dependence. Widgets, in this sense, are not bundles of information but fragments of state—designed to move the user’s judgment forward by just one step.

Instead of displaying everything, they allow users to dive deeper only when necessary. This layered structure enables AR experiences to withstand real-world usage conditions. That said, this too is a transitional phase. At present, progress is happening rapidly, and many are still exploring the practical limits of what users can realistically handle within an AR experience today.
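As a rough sketch of what an “experience unit” with a lifecycle might look like as data, the example below gives each widget a single purpose, a limited lifetime, a set of contexts in which it is valid, and an optional link to a deeper layer. The `Widget` shape, the field names, and the sample values are my own assumptions and do not correspond to any API shown in the sessions.

```typescript
// Hypothetical widget: a small fragment of state, not a bundle of information.
interface Widget {
  id: string;
  message: string;    // the single thing this widget communicates
  contexts: string[]; // user contexts in which it is allowed to appear
  shownAtMs: number;  // when it appeared
  ttlMs: number;      // how long it may remain before disappearing
  detailUrl?: string; // optional deeper layer, opened only on request
}

// Placeholder widgets for illustration only.
const sampleWidgets: Widget[] = [
  { id: "next-turn", message: "Turn left in 50 m", contexts: ["walking"], shownAtMs: 0, ttlMs: 3000 },
  { id: "meeting-soon", message: "Meeting in 10 min", contexts: ["walking", "desk"], shownAtMs: 0, ttlMs: 10000, detailUrl: "https://example.com/calendar" },
];

// A widget is shown only while it matches the current context and has not expired.
function activeWidgets(widgets: Widget[], context: string, nowMs: number): Widget[] {
  return widgets.filter(
    (w) => w.contexts.indexOf(context) !== -1 && nowMs - w.shownAtMs < w.ttlMs
  );
}
```

With this data, both widgets are active for a walking user at 1 second, only `meeting-soon` remains at 5 seconds, and a change of context to the desk removes `next-turn` immediately. How a widget disappears is part of its design rather than an afterthought.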

UI/UX is no longer a question of “how to present,” but of how to make it work under specific input constraints.

When considering UI/UX for AR and smart glasses, the most critical premise is ensuring that the experience works in an environment where input cannot be fully trusted.

Although the number of input methods has increased, their precision has not improved beyond a certain point. Gaze does not remain fixed, hands tremble, and input distance varies. As a result, many of the assumptions that held true for 2D UI environments no longer apply in AR.

At Servithink, we also anticipate that input methods will become the primary bottleneck for AR. Meta Ray-Ban has enabled English text input through the band-type “Meta Neural Band.” However, it is difficult to imagine accurately recognizing handwritten kanji characters drawn in midair. (Chinese characters would likely be even more challenging—especially complex ones such as “𰻞𰻞麺.”)

As a result, UI/UX is shifting away from requiring users to perform explicit operations and toward systems in which the hardware and software actively support user understanding. This trend will likely intensify going forward.

Reducing UI is not a matter of aesthetics—it is a design decision made to prevent failure.

AR is no longer a technology for creating “new experiences,” but an environment that raises the level of design complexity by one stage.

What becomes clear from the discussion so far is that AR and smart glasses are not emerging as highly flexible new frontiers for designers.

On the contrary, AR is taking shape as a high-difficulty design environment—one that simultaneously confronts designers with conditions such as limited usable visual space, unreliable input, constantly shifting context, and the assumption of ongoing updates and operations.

As a result, rather than prioritizing flashy visuals or deeply immersive experiences, designers are increasingly choosing more restrained but resilient approaches: breaking experiences into smaller units, reducing information density, minimizing required user interaction, and shifting situational judgment to the system side. These decisions may appear modest, but they are less prone to failure.

That said, this too is likely a transitional phase. Five years from now, as hardware continues to evolve, the fundamental thinking around UI design may change significantly. At the same time, the notion that “context is constantly changing” is something we as web creators have long grappled with when producing content in the emerging AI era. I believe there is meaningful opportunity in that overlap.

AR is not a technology for creating entirely new worlds.

Rather, it expands the scope of what must be designed and increases the weight of design responsibility.

How we confront this reality will shape the quality of future AR content—and digital experiences as a whole, including the web.

At Servithink, we will continue exploring the possibilities of “Web × AR” and sharing our findings on this blog. We hope you will continue to follow our work.
